BABY UPDATE. We are still in a holding pattern, past our due date by two days and waiting for something to happen. Those who know me personally will know that Amy’s and my baby was never going to be anything but dramatic, so this was to be expected. The midwives don’t seem concerned and we’re sure it will happen any moment. I managed to sneak in the writing of this post on Saturday morning just in case it’s later today! Now without further ado…

DROP EVERYTHING AND GO WATCH “EVERYTHING EVERYWHERE ALL AT ONCE”. It’s currently available for purchase on such platforms as Apple TV and Amazon Prime. I know it feels strange to pay a la carte for content in the streaming age, but this movie is worth it. Quite possibly the best film I’ve seen in the last five years.

If anyone saw “Swiss Army Man” (which is also amazing, and which you should see if you haven’t: Daniel Radcliffe plays a farting corpse that Paul Dano uses to escape from being stranded on an island, with all the weirdness that implies, yet by the end of the movie I was crying), this movie is from the same directors and has somewhat the same tone.

The basic premise is that Michelle Yeoh (who should absolutely get an Oscar nom for this role) plays a downtrodden immigrant daughter who runs a failing laundry, fights with her entire family, and turns out to be the potential savior of the multiverse.

The resulting film is equal parts action, weird jokes, family saga, love story, redemption arc, and sci fi puzzler. Bizarre jokes in the first act turn into heart-wrenching dramatic subplots by the third act. Michelle Yeoh wears more costumes than should exist in all of Hollywood as she burns through universes alternately searching for and escaping from the malevolent force that threatens to destroy them all.

This is the type of film that gives one hope for independent cinema in general. It’s a movie for adults that isn’t about dealing with grief or loss or alcoholism. It’s simultaneously adult and yet *fun*. Run, don’t walk, to your couch, pay $20 to buy this epic masterpiece, and put your phone down for a couple of hours to give this film your full attention. You won’t regret it.

SLOWER PACED BUT ALSO STILL INTERESTING.

This Ted Chiang interview doesn’t need a lot of explanation. He’s just the greatest sci fi writer working today in my opinion, and also an incredibly thoughtful and learned person in general. I find this interviewer a little annoying, but get past that and Chiang is worth listening to at any time in any format.

MORE FUN WITH DALL-E-2.

I have continued to (obsessively) play with the A.I. art generator that I brought to your attention two weeks ago. I really feel like I’m exploring the contours of the A.I. by interacting with it and finding new ways to prompt it—you can really “feel it thinking” so to speak. DALL-E-2, like any artist, has principles and tendencies, strengths and weaknesses, and the very rapid A-B testing allowed by the fact that it can generate a picture in 10 seconds makes it feel almost like an artistic musical instrument that I am learning to play.

DALL-E-2 definitely has trouble with things that are TOO specific or unusual, and tends to get great results from art styles that allow for more abstraction and interpretation. Example: I wanted to get an image of a zoom call window in grid format with each participant in their own little window, where the participants were dogs instead of humans. It can’t really do that, and instead I got a bunch of this—

—which is hilarious and awesome but not what I wanted, and I wasn’t able to get any closer than this to my specific idea despite dozens of tries. However, more abstracted concepts (often specific subjects and styles but non-specific viewpoint and frame) tend to yield great results even on a first try. All three of these were the first attempt for their prompt tree—

One weird thing I discovered that improves the quality of DALL-E-2’s output in many cases is to restrict its color palette. Color is a really concrete parameter (meaning A.I. is basically perfect at identifying blue things as blue, etc.), and so palette restriction is one of the instructions it follows most consistently. The results are striking—

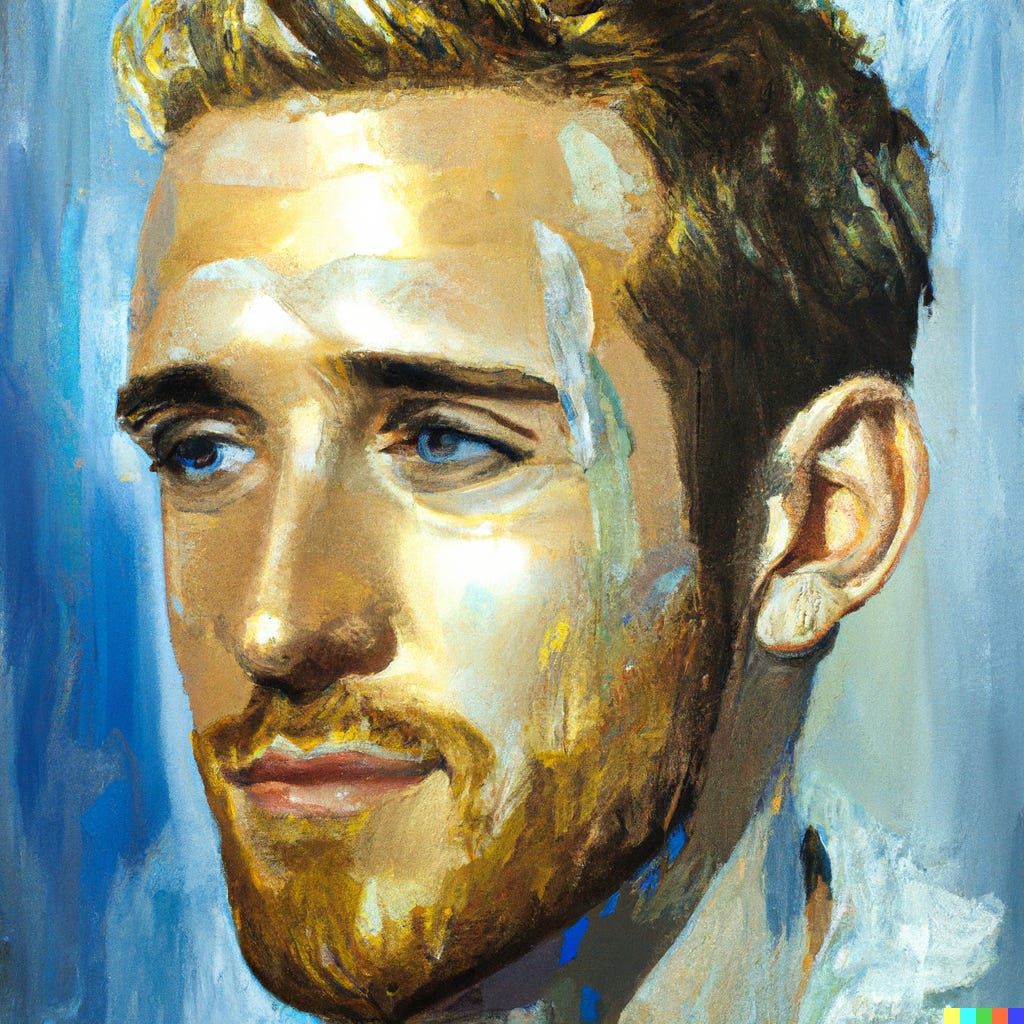

Part of why this works so well is that a restricted color palette makes for striking images in general, but for DALL-E-2 it actually seems to make the images sharper and clearer. For example, it really helps with face generation—

My hypothesis is that the main problem DALL-E-2 faces in image design is an oversaturation of possibility—when it fails it often puts too much into a frame, each word of the sentence interpreted literally and crowded together into a disjointed result—and thus restricting its options along any concrete parameter is actually just per se helpful.

This is actually also often the case with human creativity. It can be easier to generate ideas from a prompt than in a vacuum. “Think of a random word” is harder and requires more thought than “think of a color”, which you probably just did automatically when you read that clause. One more example because it’s so cool—

The last interesting thing I discovered about DALL-E-2 in this last fortnight is that it can iterate on pictures that you upload! So of course, I uploaded pictures of my dogs and started messing around. I have no idea how it does this or what the criteria are. There’s no prompt or explanation: you just upload and then “Generate variations”, and then you get results and you can use any of those to generate even more versions.

So I uploaded this pic—

—and when I generated versions, it turned my Pittie into a wiener dog—

—and then when I generated new versions from that resulting image, the grandbaby of the original image turned one of my wiener dogs into a penguin-duck—

—and I’m not at all sure what this means about our A.I. future, but I haven’t stopped laughing about it since and I just knew I had to share.

Well, that’s what I’ve got this week. I will be back next Sunday with another original story, and I’m *really* hoping that by that point I’ll be a father! :) Hope everybody has a great week!