OGWiseman Reports!

DALL-E-3 is here, and it's a great way to understand A.I. as a general phenomenon.

When DALL-E-2—the A.I. image generator—came out, I did a whole post on it. Reread if you so desire, but if you don’t want to, I’ll be referring to some of the highlights in this post on its successor, DALL-E-3, which is now available through Bing.

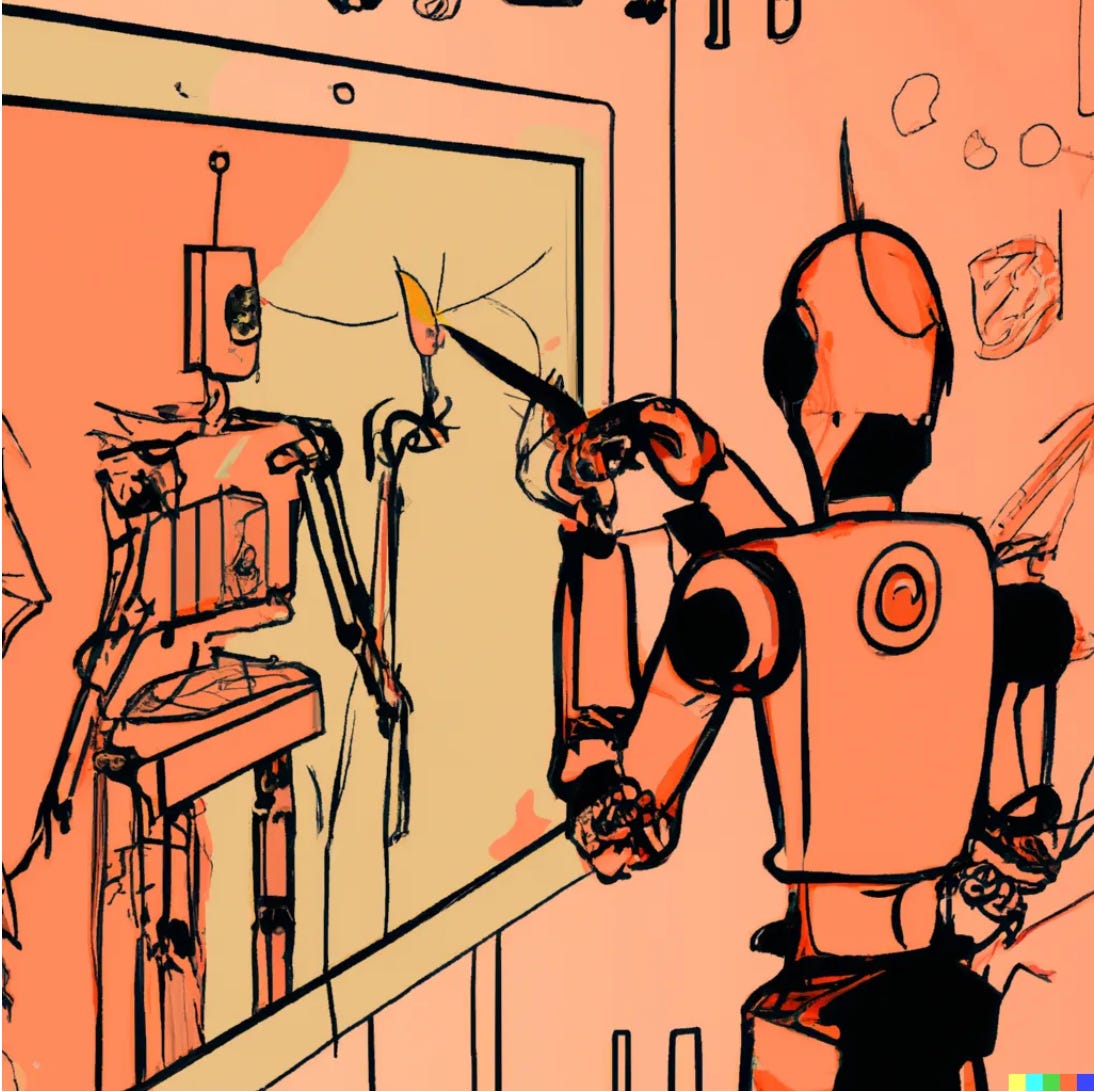

Sometimes, when you write something, the hook is obvious. This is one of those times. Here’s the first prompt I ever gave an A.I. image generator: “A robot painting a portrait of another robot.”

Here’s the best result from DALL-E-2:

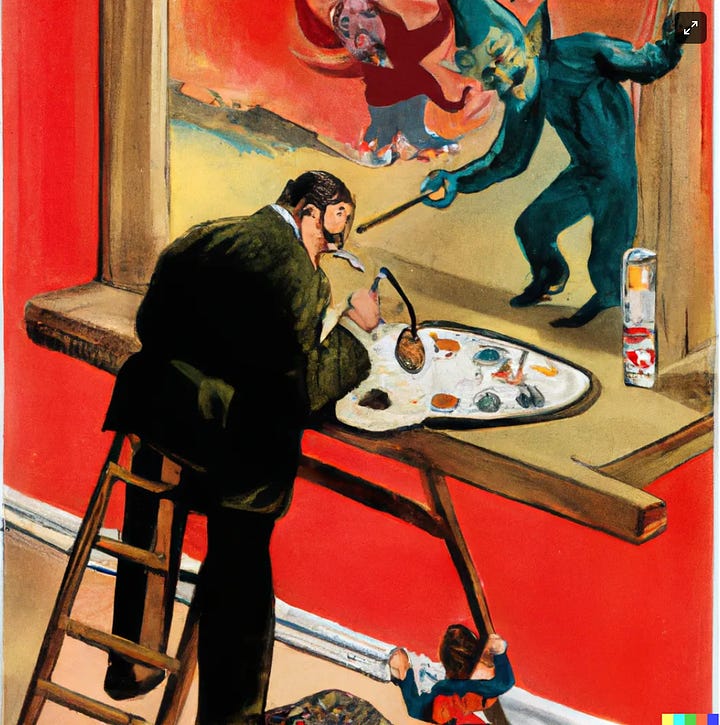

Here’s the exact same prompt, given to DALL-E-3:

Honestly, that could be the whole post. It’s possible to *like* the first image more, of course (I actually do prefer it as a piece of art), and there’s a whole meta-question about whether “do exactly what the prompt says at an amazing technical level every time” is really the best goal when creating art, which depends so often on serendipity, whimsy, and irony. (This is a subject for another essay at another time.)

Here’s what’s not debatable: On a technical level, the second image blows the first out of the water. In artistic technique, interpretation of the prompt language, and everything else, it just owns. There are humans who can do this stuff, but they work on commission and their art hangs in galleries.

Look at the hands. DALL-E-2 had a known weakness in that it didn’t do hands well at all. (I’m told that hands are very difficult for human artists as well, although everything is when you draw like me.) DALL-E-3 hands are flawless, down to the small muscles standing out where they’re used to hold the brush.

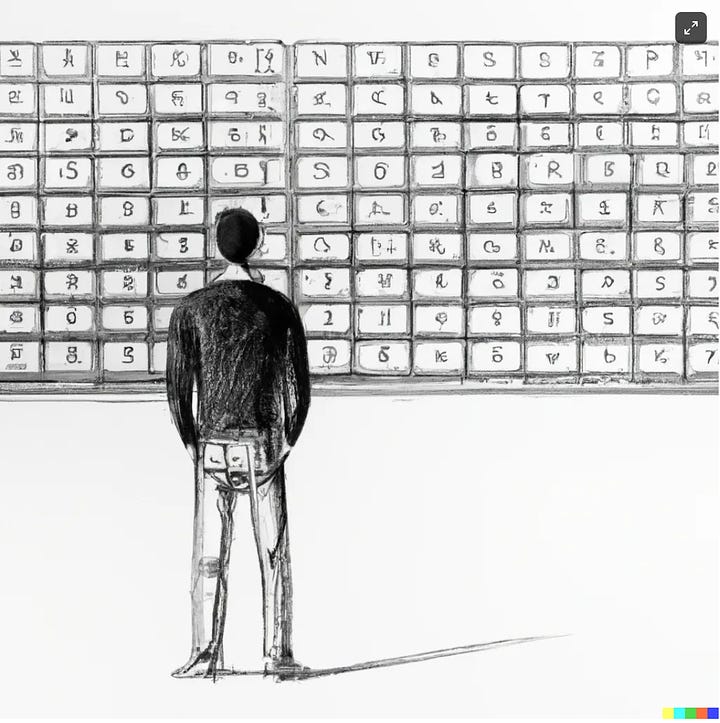

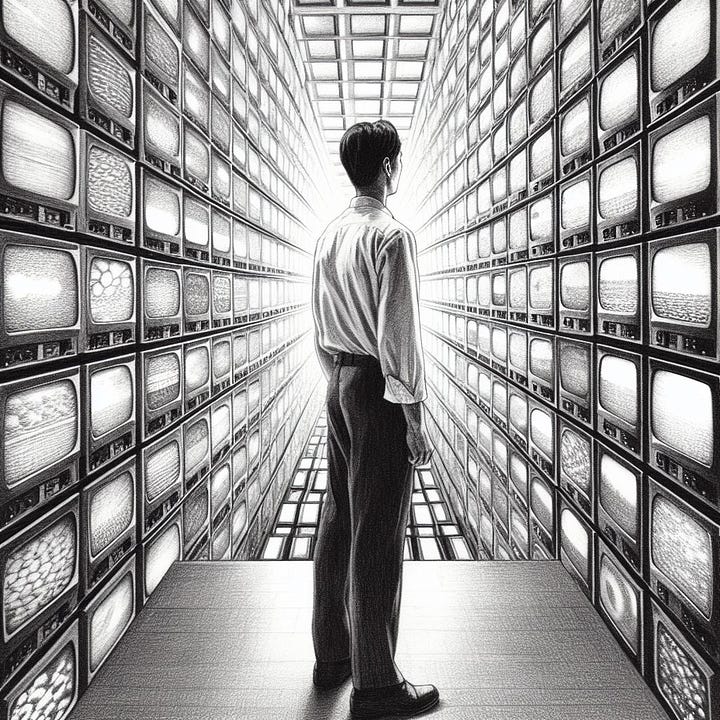

This is perhaps the most outstanding example of this improvement I’ve created so far, but it’s not the only one. As a benchmark, I ran every prompt from my DALL-E-2 post through DALL-E-3. Here are the best ones, with DALL-E-2 on the left and DALL-E-3 on the right:

What you’re looking at here is an example of non-human, discontinuous, geometric skill improvement. To put it another way, what you’re looking at here is massive cultural and economic change, with computers as the forcing mechanism.

All human learning follows a diminishing-returns paradigm. Getting from 0 to 1 on the 1-100 skill percentile scale is easy: just start doing it at all and you’re basically there. Getting from the 98th to the 99th percentile is in some sense “the same amount” of improvement, but it takes way, way, WAY more time and practice to go that distance, and for people without natural talent, going from 98 to 99 is probably just impossible.

Now consider the level of improvement evidenced by the images above. It occurred in less than 2 years, with a (relatively) small amount of resources and talent pointed at it. If a human showed that kind of improvement in two years, we’d call them an artistic genius. And that’s not even accounting for the fact that each of those images took the A.I. only a few moments to make!

Some credible experts in the A.I. space predict that we will be training models 1000x (not a typo) as large as this one within three years. The advance from DALL-E-2 to DALL-E-3 was less than 10x, starting from a lower value. Excuse me, my head just exploded, I’ll need to piece it back together before I can continue. Here are a couple more comparison examples while I do that.

And, if that wasn’t enough, DALL-E-3 is a quality ratchet: It can do a volume of work limited only by the availability of electricity and computer chips, it never loses enthusiasm, and the quality will never go down no matter how many times you run it.

Now it’s true that not all kinds of work are as easy to replace as making art, which has the virtue of allowing errors at a high rate without catastrophic consequences. An A.I. that does legal work, for example, needs a much, much lower error rate than one that draws.

But, looking at these images, it seems clear that the biggest thing happening is that the error rate is dropping like a stone. DALL-E-3 is misinterpreting less, and it’s hitting the prompts more thoroughly and exactly. At this point, the error rate is already very low, and with another few versions, it will approach zero.

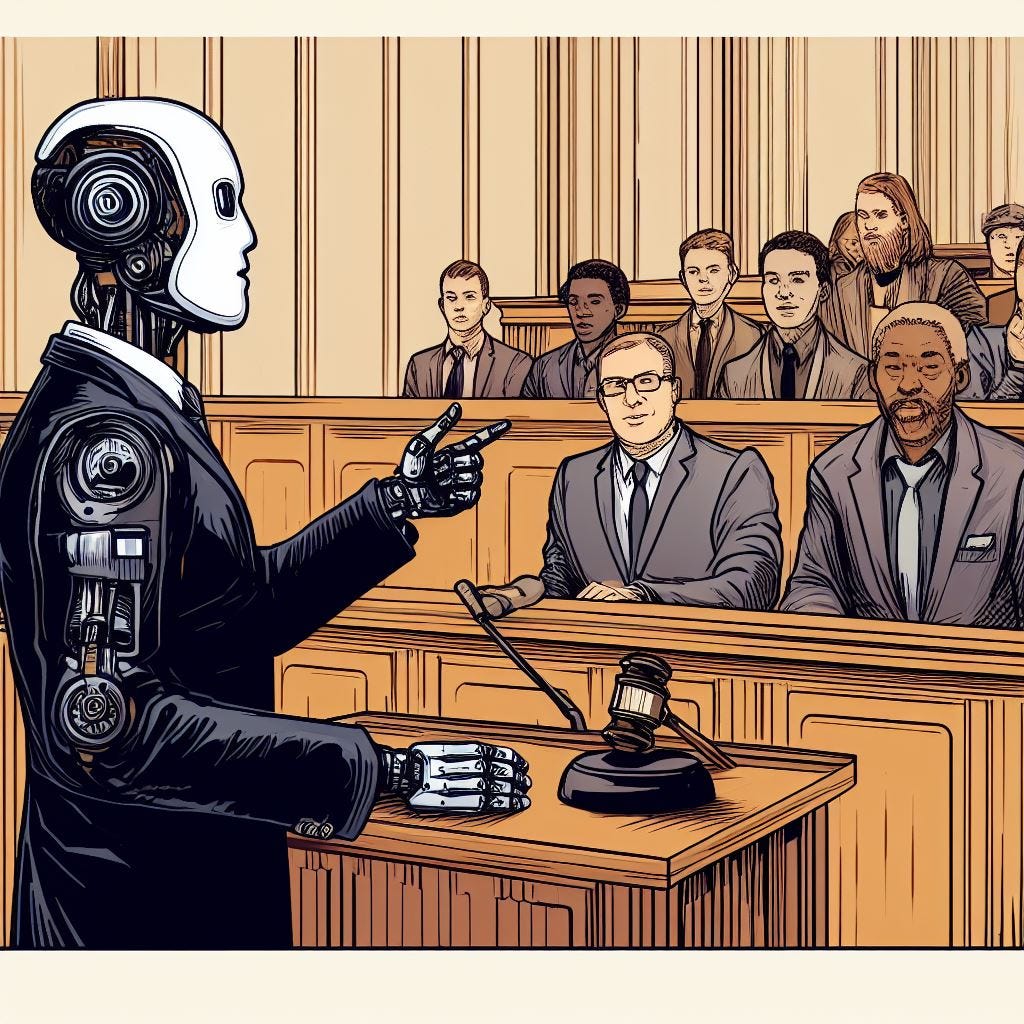

And of course, legal work (for just one example) is in some ways a lot more structured and concrete than art prompts. Cases have facts. There is a limited volume of prior case law, and for an A.I., memorizing every single case ever adjudicated is a trivial task. When I look at this progression, achieving an error rate far lower than human lawyers (or almost any other knowledge worker), and approaching zero, doesn’t seem at all out of reach.

Now, DALL-E-3 remains limited and imperfect, even in this new form. The image above, for example, while striking, jumbles up pieces of a courtroom in a non-linear way. Why is there a gavel on the podium where the lawyer is presenting? Where is the rest of the jury, and why aren’t they sitting in a traditional jury box? These are pretty darn basic mistakes that no human artist worth their salt would make. And, I must confess, I tried several versions of the prompt, and this was the best result it was able to produce.

It is also very hit or miss when it comes to detailed and exact prompts, where I’m clearly looking for something very specific. Here’s one I tried a bunch of versions of and I’d have to say it’s basically a failure:

I’d say any competent human reader could understand what I was looking for here: the old man is only visible in the droplets of spray, as if the droplets were a portal into an alternate reality, so we only see pieces of him. He isn’t meant to be literally in the room as well, since that would clearly violate the “alone in his bedroom” part of the prompt. That level of specificity is just not something the A.I. can handle at this point, either because its language-processing is not advanced enough or because its training data doesn’t contain relevant examples.

What you really need here—which I’d heard rumors was going to be in DALL-E-3 and which I was disappointed was not included—is the ability to iterate, meaning to get an image, then give the A.I. instructions for how to change the image, and then have it go back and do another version rather than starting fresh with a whole new image.

This sort of back-and-forth is clearly possible. ChatGPT does exactly this sort of thing. I’m not sure exactly why they didn’t add that functionality. My best guess would be that generating these images is expensive (relatively, when you have millions of people generating) and so they want to spread the budget they have wide rather than deep. That’s certainly the reason why there’s a weekly generating limit. I’m hopeful for future versions, though, because that would unlock whole new levels of creation.

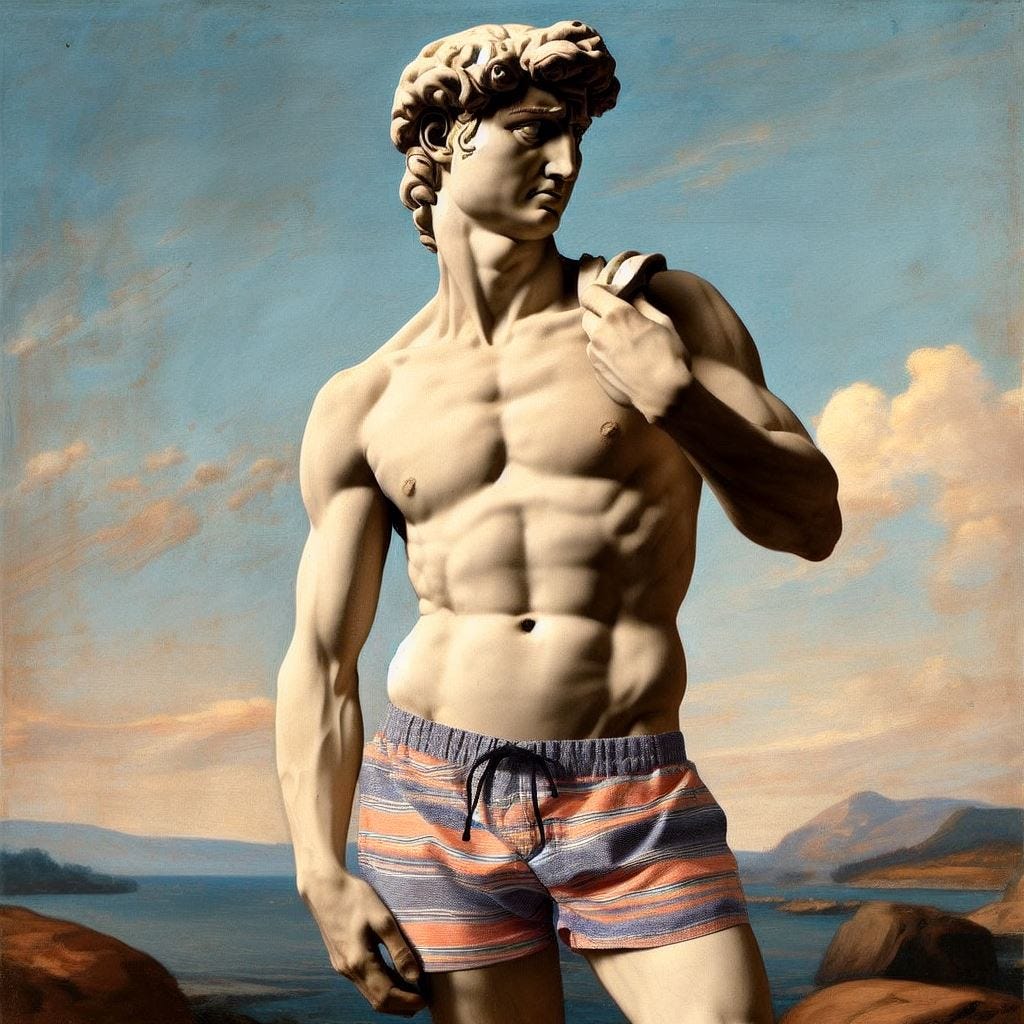

Despite these quibbles, DALL-E-3 is really, really impressive. And of course, the internet hivemind has gone much farther than I have alone in exploring its capabilities. @PackyM on Twitter appears to have discovered that it loves America:

Here’s a Twitter thread with a bunch of incredible images that I’m not going to repost directly here because a lot of them express a worldview I don’t agree with, and a few are just antisocial. But on a technical level, they are absolutely spectacular. They were mostly culled from 4chan, which should contextualize them for people who know what that is. If you don’t know what that is, good for you, never go there.

This post has no natural endpoint. The whole thing about generative A.I. is that it floods the zone with everything imaginable. If you want to see more, the internet is bursting with it already, and it’s only going to get more so. But that’s enough from me, for now.

The main takeaway here is the pace of improvement. You don’t have to be a futurist to extrapolate just a little ways and see the world changing. Heck, just its effect on the 2024 elections could be profound and is at this point underrated. Get ready, people!

END

Thanks as always for reading! If you enjoyed this post, please help me out by liking, commenting, or sharing with others. I hope everyone has a great week, and I will be back next Sunday with another original story.